Posted 07.04.2020

By Luke Pickering

There are many key components to SEO, but one that is very often overlooked is crawl budget. Many of you will have heard of it without realising that you can, in fact, have some control over it. Most site owners don’t need to worry about it immediately. However, if you run a large-scale website, crawl budget is something you should be optimizing for SEO success. In 2017 Google stated that crawling itself is not a ranking factor, but it still matters for SEO because a page has to be crawled before it can appear in search results. Let’s start at the beginning by understanding crawl budget…

Put simply, crawl budget is the number of times a search engine spider hits your website on any given day. There isn’t a fixed limit on the number or frequency of crawler visits, but a number of factors will influence them.

Why does this matter? Logically, you want search engine spiders to discover as many of your site’s important pages as possible. Also, if you’re putting out new content on your website, a larger crawl budget means crawlers will find and index that content more quickly.

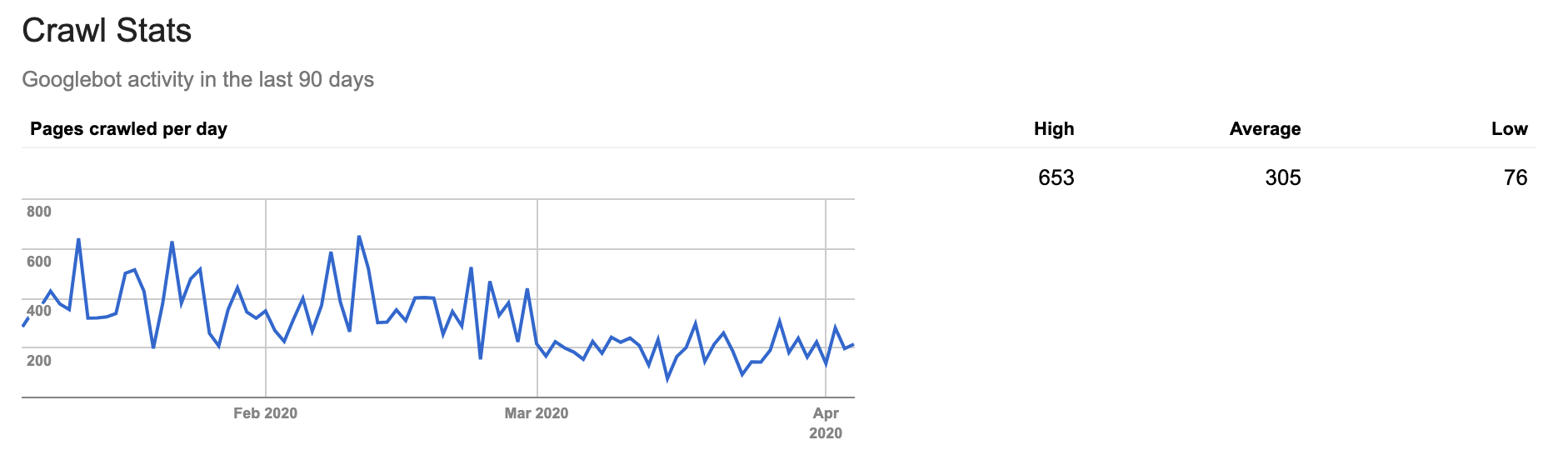

You can get an understanding of your site’s crawl budget in Google Search Console. The data provided is only general, but it is essential. In your Search Console account, go to ‘Crawl’ and then ‘Crawl Stats’ to see your high, low and average number of pages crawled per day. Your monthly crawl budget is roughly your average multiplied by 30.
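The monthly estimate described above is simple arithmetic. As a minimal sketch, assuming a made-up daily average (the figure of 120 and the function name are illustrative, not taken from any real report):

```python
def monthly_crawl_budget(avg_pages_per_day: float, days: int = 30) -> int:
    """Rough monthly crawl budget: average pages crawled per day times days."""
    return round(avg_pages_per_day * days)


# Hypothetical average read from the Crawl Stats report.
average_per_day = 120
print(monthly_crawl_budget(average_per_day))  # 3600
```

Treat the result as a ballpark figure only, since the daily numbers behind it are themselves averages.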

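We don’t know exactly how search engines form crawl budget for websites, but according to Google there are two factors that determine it: the crawl rate limit (how much crawling your server can handle without slowing down) and crawl demand (how much Google wants to crawl your pages, based on how popular they are and how stale its copy has become).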

The first tip, allowing your important pages to be crawled in robots.txt, is a no-brainer but a very important first step. You can manage robots.txt by hand or with a website auditor tool. Either way, you edit the file to allow or block crawling of any page on your domain, upload the edited version, and Google will then be able to crawl the pages you have allowed. With a tool, this takes seconds.
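Before uploading an edited robots.txt, it can help to confirm that none of your important pages are blocked by accident. The sketch below uses Python’s standard `urllib.robotparser` for this. The robots.txt content, the example.com URLs and the `blocked_pages` helper are all hypothetical, and the standard parser only does simple prefix matching, so it will not reproduce every rule Googlebot understands (wildcards, for example).

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; the /blog/ rule stands in for an accidental block.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /blog/
Allow: /
"""

# Hypothetical pages you want search engines to crawl.
IMPORTANT_PAGES = [
    "https://www.example.com/",
    "https://www.example.com/services/seo",
    "https://www.example.com/blog/crawl-budget",
]


def blocked_pages(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt forbids for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


print(blocked_pages(ROBOTS_TXT, IMPORTANT_PAGES))
# ['https://www.example.com/blog/crawl-budget']
```

Any URL that shows up in the output is an important page the file would keep crawlers away from.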

Watching out for redirect chains is a normal website health check you should be doing anyway. Ideally, you would avoid having even a single redirect chain anywhere on your site. For a large website it’s pretty much impossible not to have any, but you should do your best to minimise them. The reason you don’t want long redirect chains is that a search engine crawler might stop following them and never actually reach the page you want indexed. One or two redirects will probably not damage your site, but they are still worth cleaning up.
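One way to spot chains is to export your redirects (from a crawl tool or server config) and follow each one to its end. The sketch below assumes the redirects are already available as a simple source-to-target mapping. The paths, the function names and the threshold of one redirect are illustrative assumptions, not a rule from any search engine.

```python
# Hypothetical redirect map: source path -> target path.
REDIRECTS = {
    "/old-page": "/older-page",
    "/older-page": "/new-page",
    "/promo": "/offers",
}


def redirect_chain(start, redirects, max_hops=10):
    """Follow redirects from start and return every URL visited, in order."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in chain[:-1]:  # redirect loop: stop instead of spinning
            break
    return chain


def long_chains(redirects, max_redirects=1):
    """Return chains with more than max_redirects hops, keyed by first URL.

    URLs that are themselves redirect targets are skipped, because they are
    already covered by the chain that leads to them.
    """
    targets = set(redirects.values())
    result = {}
    for start in redirects:
        if start in targets:
            continue
        chain = redirect_chain(start, redirects)
        if len(chain) - 1 > max_redirects:
            result[start] = chain
    return result


print(long_chains(REDIRECTS))
# {'/old-page': ['/old-page', '/older-page', '/new-page']}
```

Each reported chain can usually be fixed by pointing the first URL straight at the final destination.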

Use HTML wherever possible. Admittedly, Google has become better at crawling JavaScript and Flash, but other search engines aren’t quite at that stage yet. Every search engine can read HTML, so sticking to it means you’re not hurting your chances with any crawler.
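To see why plain HTML is the safe choice, consider what a crawler that does not run JavaScript actually finds on a page. The sketch below parses a hypothetical page with Python’s standard `html.parser` and lists the links present in the raw markup. The page content and the `LinkCollector` class are made up for illustration, and real crawlers are far more sophisticated than this.

```python
from html.parser import HTMLParser

# Hypothetical page: one link in plain HTML, one injected by JavaScript.
PAGE = """
<nav><a href="/services">Services</a></nav>
<div id="menu"></div>
<script>
  document.getElementById("menu").innerHTML = '<a href="/pricing">Pricing</a>';
</script>
"""


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags found in the raw HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_raw_html(html):
    """Return the links visible without executing any script."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.links


print(links_in_raw_html(PAGE))  # ['/services']
```

The `/pricing` link only exists after the script runs, so a crawler that reads HTML alone would never discover it.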

It’s really important to take care of your XML sitemap and update it regularly. With an up-to-date sitemap, bots will have a much easier time understanding where your internal links lead. You should also make sure that it corresponds to the newest uploaded version of robots.txt. We would recommend a tool such as Yoast, a popular SEO plugin for WordPress that updates your sitemap automatically, or you can generate one with free tools such as Screaming Frog.
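If you maintain the sitemap yourself, keeping it in step with robots.txt can be automated. The following is a minimal sketch using only Python’s standard library: it builds a sitemap in the sitemaps.org format and leaves out any URL that robots.txt blocks. The page list, dates, robots.txt content and the `build_sitemap` function are hypothetical, and this is not how Yoast or Screaming Frog work internally.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical robots.txt and page list (URL, last modified date).
ROBOTS_TXT = "User-agent: *\nDisallow: /cart/\n"
PAGES = [
    ("https://www.example.com/", "2020-04-07"),
    ("https://www.example.com/services", "2020-03-15"),
    ("https://www.example.com/cart/checkout", "2020-01-02"),
]


def build_sitemap(pages, robots_txt, user_agent="Googlebot"):
    """Return sitemap XML as a string, skipping URLs robots.txt disallows."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())

    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        if not robots.can_fetch(user_agent, url):
            continue  # a blocked URL in the sitemap sends crawlers mixed signals
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod

    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


print(build_sitemap(PAGES, ROBOTS_TXT))
```

Regenerating the file whenever pages or robots.txt change keeps the two from drifting apart.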

Crawl budget optimization is key to best-practice SEO. Hopefully, these tips will help you to optimize your crawl budget and improve your SEO performance. If you’d like some help getting started, don’t hesitate to get in touch with us.

Part of The Digital Maze Group